In modern manufacturing, predictive modeling plays a crucial role in optimizing processes and improving efficiency. One significant area is gear hobbing, a machining process used to produce gears with high precision. The gear hobbing machine is central to this process, and predicting optimal machining parameters for it can improve performance and reduce costs. However, predictive models like Support Vector Regression (SVR) often face challenges in hyper-parameter tuning, especially with large datasets. In this article, I present a novel approach that combines K-means clustering and a chaotic Harris Hawks optimization algorithm to adaptively adjust SVR hyper-parameters, addressing issues of accuracy, stability, and computational complexity. I apply the method to gear hobbing parameter prediction to demonstrate its effectiveness in a real-world scenario.

Support Vector Regression has been widely adopted in various prediction tasks due to its robustness and generalization capabilities. For instance, in gear hobbing processes, accurately predicting parameters such as cutting speed and feed rate can significantly impact the quality of the produced gears. However, the performance of SVR depends heavily on hyper-parameters such as the penalty factor, the kernel type, and the kernel coefficients. Manual tuning of these parameters is time-consuming and often suboptimal, which has led to the exploration of intelligent optimization algorithms. While methods like particle swarm optimization and genetic algorithms have shown promise, they can be computationally expensive, particularly on large datasets. To mitigate this, data preprocessing techniques like K-means clustering are employed to reduce dataset size, but this may sacrifice prediction accuracy. My approach integrates K-means clustering with an enhanced optimization algorithm to balance these aspects effectively.

The core of my method involves using K-means clustering to condense the training data into cluster centers, which accelerates the SVR training process. Subsequently, a chaotic Harris Hawks optimization (CHHO) algorithm is applied to fine-tune the SVR hyper-parameters. The chaos element helps avoid local optima, improving the search efficiency. The hyper-parameters considered include the kernel function (e.g., RBF or Sigmoid), penalty coefficient, and other coefficients, all of which influence the prediction outcome. By iteratively updating these parameters based on validation set performance, the method achieves superior prediction accuracy while maintaining reasonable computational time. This is especially relevant in gear hobbing, where rapid and accurate parameter prediction can optimize the gear hobbing machine operations.
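The two-stage idea (compress the training set with K-means, then score a hyper-parameter candidate by the validation MSE of an SVR trained on the cluster centres) can be sketched with scikit-learn. This is a minimal illustration under stated assumptions, not the author's implementation: the data are synthetic, clustering is done in the joint feature-target space so that each centre carries its own target value (the article does not say how targets are assigned to centres), and the helper names `compress_with_kmeans` and `fitness` and the dictionary keys `kernel`, `C`, and `gamma` are hypothetical labels for the kernel type, penalty factor, and kernel coefficient.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import mean_squared_error
from sklearn.svm import SVR


def compress_with_kmeans(X_train, y_train, c_num, seed=0):
    """Replace the training set by c_num cluster centres (hypothetical helper).

    Assumption: clustering runs on [features, target] so that each centre
    also provides a target value for SVR training.
    """
    joint = np.column_stack([X_train, y_train])
    km = KMeans(n_clusters=c_num, n_init=10, random_state=seed).fit(joint)
    centres = km.cluster_centers_
    return centres[:, :-1], centres[:, -1]


def fitness(params, X_cc, y_cc, X_val, y_val):
    """Validation MSE of an SVR trained on the cluster centres (lower is better)."""
    model = SVR(kernel=params["kernel"], C=params["C"], gamma=params["gamma"])
    model.fit(X_cc, y_cc)
    return mean_squared_error(y_val, model.predict(X_val))


# Synthetic stand-in data (hypothetical, not from the article)
rng = np.random.default_rng(0)
X = rng.uniform(0, 1, size=(600, 4))
y = np.sin(3 * X[:, 0]) + X[:, 1] ** 2 + 0.05 * rng.normal(size=600)
X_tr, y_tr, X_val, y_val = X[:500], y[:500], X[500:], y[500:]

X_cc, y_cc = compress_with_kmeans(X_tr, y_tr, c_num=60)
mse_v = fitness({"kernel": "rbf", "C": 10.0, "gamma": 1.0}, X_cc, y_cc, X_val, y_val)
print(X_cc.shape, mse_v)
```

An optimizer such as CHHO would call `fitness` once per candidate, which is why shrinking 500 training rows to 60 centres directly cuts the cost of every evaluation.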

To formalize the approach, let me define the SVR hyper-parameter set as $X = \{X_1, X_2, \dots, X_n\}$, where each $X_i$ represents a combination of parameters such as kernel type, penalty factor $PF$, coefficient $\gamma$, and others. The K-means clustering is applied to the training set to obtain cluster centers $CCs$, reducing the data points used in SVR training. The objective is to minimize the mean squared error (MSE) on the validation set, expressed as:

$$MSE_V = \frac{1}{N_v} \sum_{i=1}^{N_v} (y_i - \hat{y}_i)^2$$

where $N_v$ is the number of validation samples, $y_i$ is the actual value, and $\hat{y}_i$ is the predicted value. The CHHO algorithm updates the hyper-parameters $X$ by simulating the cooperative hunting behavior of Harris hawks, using chaotic maps for better exploration. The exploration-phase position update in CHHO is:

$$X(t+1) =
\begin{cases}
X_{rand}(t) - r_1 \left| X_{rand}(t) - 2 r_2 X(t) \right| & \text{if } q \geq 0.5 \\
\left( X_{opt}(t) - X_m(t) \right) - r_3 \left( LB + r_4 (UB - LB) \right) & \text{if } q < 0.5
\end{cases}$$

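Here, $X(t)$ is the current position vector (one candidate hyper-parameter combination), $X_{rand}(t)$ is a randomly selected hawk from the current population, $X_{opt}(t)$ is the best solution found so far, $X_m(t)$ is the mean position of the current population, $r_1$ to $r_4$ and $q$ are random numbers in $[0, 1]$, and $LB$ and $UB$ are the lower and upper bounds of the hyper-parameters. The chaotic component is introduced using a logistic map: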

$$r_C(t+1) = 4 r_C(t) \left(1 - r_C(t)\right)$$

The chaotic sequence diversifies the search and helps prevent premature convergence. Coupling CHHO with SVR lets the model adapt to the characteristics of gear hobbing data, where parameters such as workpiece modulus and cutting depth are critical.
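The exploration update and the logistic map can be sketched in NumPy as follows. This is a minimal illustration, not the author's code: it assumes the chaotic states replace the uniform random numbers $r_1$ to $r_4$ (the article does not say exactly where chaos is injected), it clips new positions to $[LB, UB]$ as an added safeguard, and the bounds and the placeholder best solution are hypothetical. A full Harris Hawks optimizer also switches to exploitation (besiege) phases driven by the prey's escaping energy; those phases are omitted because only the exploration equation is given above.

```python
import numpy as np


def logistic_map(r):
    """One step of the logistic map r <- 4 r (1 - r), applied element-wise."""
    return 4.0 * r * (1.0 - r)


def exploration_step(X, x_opt, lb, ub, chaos, rng):
    """Apply the exploration-phase update to every hawk (row of X).

    chaos has shape (n_hawks, 4) and supplies r1..r4; using chaotic values
    here is an assumption about how CHHO injects chaos.
    """
    n = X.shape[0]
    X_m = X.mean(axis=0)  # mean position of the current population
    X_new = np.empty_like(X)
    for i in range(n):
        r1, r2, r3, r4 = chaos[i]
        if rng.random() >= 0.5:  # q >= 0.5: move relative to a random hawk
            X_rand = X[rng.integers(n)]
            X_new[i] = X_rand - r1 * np.abs(X_rand - 2.0 * r2 * X[i])
        else:  # q < 0.5: move relative to the best solution and the mean
            X_new[i] = (x_opt - X_m) - r3 * (lb + r4 * (ub - lb))
    # Clipping to [LB, UB] is an added safeguard, not part of the equation
    return np.clip(X_new, lb, ub)


rng = np.random.default_rng(1)
lb = np.array([0.1, 1e-3])    # hypothetical bounds for (penalty factor, gamma)
ub = np.array([100.0, 10.0])
X = lb + rng.random((5, 2)) * (ub - lb)
chaos = rng.uniform(0.01, 0.99, size=(5, 4))
for t in range(3):
    chaos = logistic_map(chaos)  # advance the chaotic sequence each iteration
    x_opt = X[0]                 # placeholder: in practice, the hawk with the lowest MSE_V
    X = exploration_step(X, x_opt, lb, ub, chaos, rng)
```

Because the logistic map with coefficient 4 is deterministic yet non-repeating, successive iterations sample $r_1$ to $r_4$ without settling into a fixed pattern, which is the property the text relies on to avoid premature convergence.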

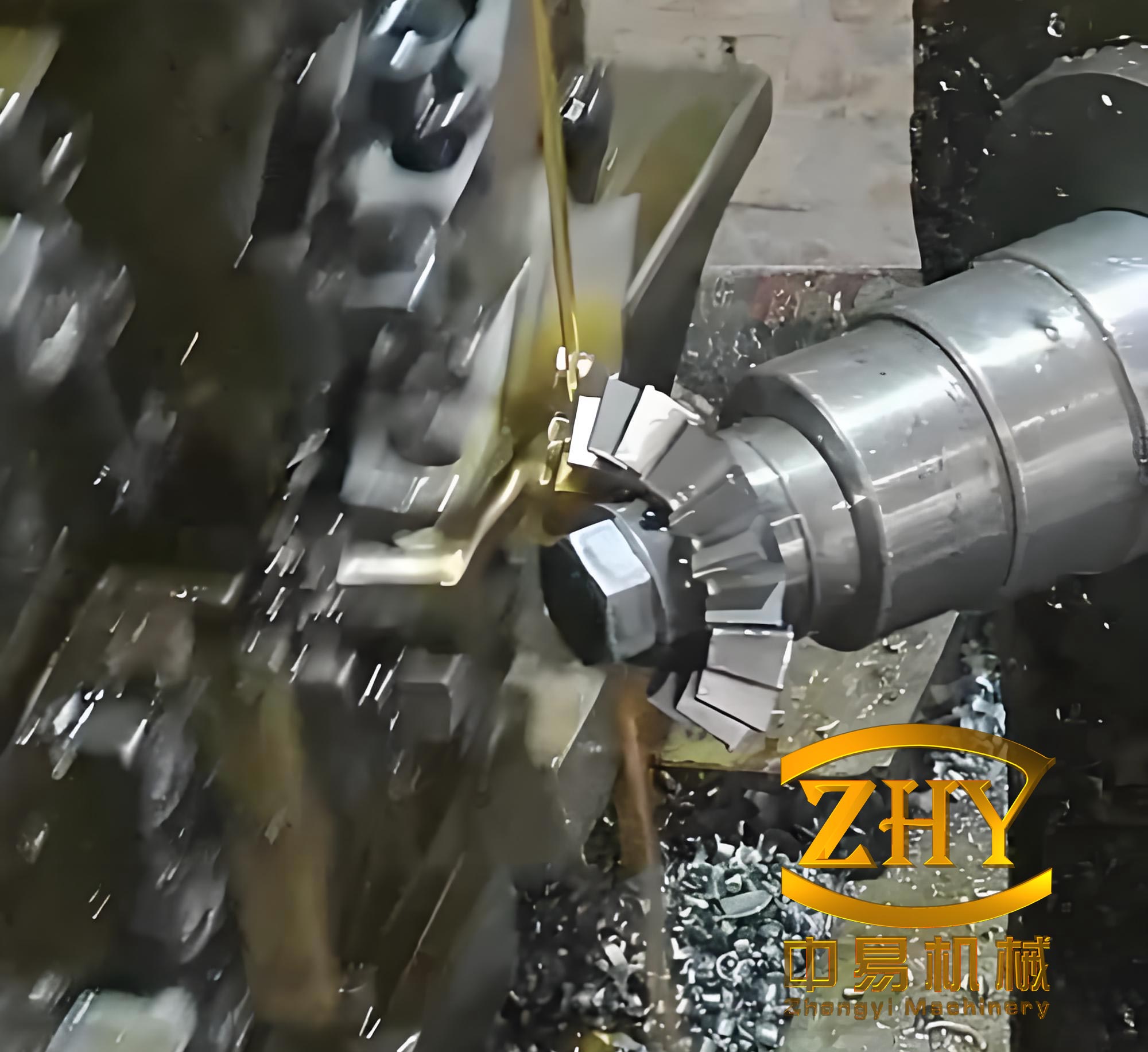

In the context of gear hobbing, the gear hobbing machine operates by rotating the hob and workpiece in a synchronized manner to cut gear teeth. Accurate prediction of parameters such as spindle speed and feed rate is essential for achieving desired gear quality. My method leverages historical data from gear hobbing processes to train the SVR model, with hyper-parameters optimized via CHHO. For example, the input features might include gear modulus, pressure angle, number of teeth, and others, while the target variables are the optimal cutting parameters. The use of K-means clustering allows the model to handle large datasets efficiently, which is common in industrial settings where numerous gear hobbing operations are recorded.
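Because scikit-learn's `SVR` predicts a single target, one way to predict spindle speed and feed rate together is to fit one SVR per target with `MultiOutputRegressor`. The sketch below is hypothetical: the feature columns follow the examples in the text, but the data are synthetic, and the article does not state whether it trains one model per target.

```python
import numpy as np
from sklearn.multioutput import MultiOutputRegressor
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

# Hypothetical feature order: modulus, pressure angle (deg), number of teeth, hob diameter (mm)
rng = np.random.default_rng(0)
X = np.column_stack([
    rng.uniform(1, 6, 40),
    rng.choice([20.0, 25.0], 40),
    rng.integers(17, 80, 40),
    rng.uniform(60, 120, 40),
])
# Synthetic targets (not article data): spindle speed (rpm), feed rate (mm/r)
Y = np.column_stack([
    300 + 10 * X[:, 0] + rng.normal(0, 5, 40),
    1.5 + 0.05 * X[:, 0] + rng.normal(0, 0.02, 40),
])

# One SVR per target; features are standardized because the RBF kernel is scale-sensitive
model = MultiOutputRegressor(
    make_pipeline(StandardScaler(), SVR(kernel="rbf", C=10.0, gamma="scale"))
).fit(X, Y)
pred = model.predict(X[:1])  # row = [spindle speed, feed rate]
```

Fitting separate models also lets each target have its own hyper-parameters, which fits naturally with a per-target CHHO search.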

The experimental validation of my approach involved multiple datasets, including those related to gear hobbing parameters. I compared the proposed method, termed Kmeans-CHHO-SVR, against several established techniques such as Kmeans-SVR, Kmeans-HGSO-SVR, Kmeans-SSA-SVR, Kmeans-DA-SVR, Kmeans-ALO-SVR, Kmeans-PSO-SVR, and Kmeans-HHO-SVR. The datasets varied in size and dimensionality, simulating different scenarios in gear hobbing applications. For instance, one dataset included parameters from a gear hobbing machine with features like hob diameter and number of starts, aiming to predict surface roughness or tool wear. The performance was evaluated based on prediction accuracy (MSE), stability (standard deviation), and computational time.

The K-means clustering step requires setting the number of clusters $cNum$. I determined the optimal $cNum$ experimentally by balancing test MSE against runtime; on a dataset with gear hobbing parameters, for example, $cNum=60$ gave the best trade-off. The table below lists the combined metric $CMT$ for different $cNum$ values on each dataset, where $CMT = \beta \cdot N_{MSE_T} + \delta \cdot N_{T_C}$, $N_{MSE_T}$ is the normalized test MSE, $N_{T_C}$ is the normalized computation time, $\beta=0.8$, and $\delta=0.2$. Lower $CMT$ is better, and the optimal $cNum$ is the one with the smallest value:

| Dataset ID | cNum=20 | cNum=40 | cNum=60 | cNum=80 | cNum=100 | cNum=120 | cNum=140 | cNum=160 | cNum=180 | cNum=200 | Optimal cNum |
|---|---|---|---|---|---|---|---|---|---|---|---|
| D1 | 0.271 | 0.238 | 0.023 | 0.079 | 0.404 | 0.438 | 0.517 | 0.890 | 0.488 | 0.681 | 60 |
| D2 | 0.800 | 0.432 | 0.720 | 0.019 | 0.162 | 0.489 | 0.351 | 0.292 | 0.598 | 0.331 | 80 |
| D3 | 0.800 | 0.056 | 0.053 | 0.070 | 0.088 | 0.077 | 0.138 | 0.170 | 0.182 | 0.200 | 60 |
| D4 | 0.800 | 0.637 | 0.439 | 0.668 | 0.546 | 0.588 | 0.521 | 0.450 | 0.175 | 0.190 | 180 |
| D5 | 0.201 | 0.284 | 0.048 | 0.237 | 0.073 | 0.140 | 0.097 | 0.149 | 0.201 | 0.906 | 60 |

As shown, the optimal $cNum$ varies from dataset to dataset, so it should be tuned for each gear hobbing dataset rather than fixed in advance. Clustering reduces the computational load without significantly compromising accuracy, which makes the approach attractive for time-sensitive applications on gear hobbing machines.
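The $cNum$ selection rule can be sketched as below. The `cmt` helper assumes (the article does not say) that $N_{MSE_T}$ and $N_{T_C}$ are min-max normalized over the candidate $cNum$ values, so each term lies in $[0, 1]$; the second part only re-applies the argmin to the CMT rows copied from the table above, so it reproduces the "Optimal cNum" column rather than any new result.

```python
import numpy as np

C_NUMS = np.arange(20, 201, 20)  # candidate cNum values 20, 40, ..., 200


def min_max(v):
    """Scale values to [0, 1]; an assumed normalization for N_MSE_T and N_T_C."""
    v = np.asarray(v, dtype=float)
    span = v.max() - v.min()
    return np.zeros_like(v) if span == 0 else (v - v.min()) / span


def cmt(mse_test, runtime, beta=0.8, delta=0.2):
    """CMT = beta * N_MSE_T + delta * N_T_C for each candidate cNum (lower is better)."""
    return beta * min_max(mse_test) + delta * min_max(runtime)


# CMT rows copied from the table above
CMT_TABLE = {
    "D1": [0.271, 0.238, 0.023, 0.079, 0.404, 0.438, 0.517, 0.890, 0.488, 0.681],
    "D2": [0.800, 0.432, 0.720, 0.019, 0.162, 0.489, 0.351, 0.292, 0.598, 0.331],
    "D3": [0.800, 0.056, 0.053, 0.070, 0.088, 0.077, 0.138, 0.170, 0.182, 0.200],
    "D4": [0.800, 0.637, 0.439, 0.668, 0.546, 0.588, 0.521, 0.450, 0.175, 0.190],
    "D5": [0.201, 0.284, 0.048, 0.237, 0.073, 0.140, 0.097, 0.149, 0.201, 0.906],
}
optimal = {ds: int(C_NUMS[np.argmin(row)]) for ds, row in CMT_TABLE.items()}
print(optimal)  # {'D1': 60, 'D2': 80, 'D3': 60, 'D4': 180, 'D5': 60}
```

With $\beta=0.8$ the metric is dominated by accuracy, so a slower $cNum$ is only rejected when its runtime penalty outweighs a clearly lower test MSE.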

For the prediction accuracy and stability analysis, I conducted experiments on five datasets, including those simulating gear hobbing parameters. The results, averaged over 10 runs, demonstrate that Kmeans-CHHO-SVR achieves the lowest MSE on validation and test sets in most cases. The table below presents the mean and standard deviation of MSE for validation ($MSE_V$) and test ($MSE_T$) sets:

| Dataset ID | Index | Kmeans-SVR (Avg±Std) | Kmeans-HGSO-SVR (Avg±Std) | Kmeans-SSA-SVR (Avg±Std) | Kmeans-DA-SVR (Avg±Std) | Kmeans-ALO-SVR (Avg±Std) | Kmeans-PSO-SVR (Avg±Std) | Kmeans-HHO-SVR (Avg±Std) | Kmeans-CHHO-SVR (Avg±Std) |
|---|---|---|---|---|---|---|---|---|---|
| D1 | $MSE_V$ | 0.016±0.001 | 0.036±0.030 | 0.010±0.001 | 0.010±0.001 | 0.010±0.000 | 0.115±0.067 | 0.010±0.000 | 0.010±0.000 |
| | $MSE_T$ | 0.092±0.004 | 0.093±0.010 | 0.109±0.037 | 0.113±0.052 | 0.091±0.003 | 0.121±0.030 | 0.113±0.063 | 0.091±0.004 |
| D2 | $MSE_V$ | 0.039±0.002 | 0.039±0.036 | 0.022±0.000 | 0.024±0.003 | 0.022±0.001 | 0.069±0.027 | 0.021±0.001 | 0.020±0.002 |
| | $MSE_T$ | 0.023±0.001 | 0.027±0.007 | 0.017±0.002 | 0.020±0.005 | 0.016±0.003 | 0.049±0.027 | 0.017±0.003 | 0.016±0.002 |
| D3 | $MSE_V$ | 0.039±0.003 | 0.007±0.008 | 0.000±0.000 | 0.010±0.029 | 0.000±0.001 | 0.233±0.162 | 0.000±0.000 | 0.000±0.000 |
| | $MSE_T$ | 0.056±0.004 | 0.005±0.007 | 0.000±0.000 | 0.092±0.287 | 0.001±0.001 | 0.309±0.214 | 0.000±0.000 | 0.000±0.000 |
| D4 | $MSE_V$ | 0.006±0.001 | 0.064±0.076 | 0.002±0.000 | 0.002±0.000 | 0.002±0.000 | 0.124±0.071 | 0.002±0.000 | 0.002±0.000 |
| | $MSE_T$ | 0.006±0.001 | 0.066±0.077 | 0.002±0.000 | 0.002±0.000 | 0.002±0.000 | 0.126±0.072 | 0.002±0.000 | 0.002±0.000 |
| D5 | $MSE_V$ | 0.180±0.004 | 0.111±0.056 | 0.050±0.035 | 0.050±0.040 | 0.054±0.036 | 0.143±0.044 | 0.062±0.007 | 0.044±0.030 |
| | $MSE_T$ | 0.155±0.003 | 0.107±0.031 | 0.066±0.036 | 0.084±0.121 | 0.058±0.037 | 0.120±0.037 | 0.086±0.018 | 0.052±0.035 |

From the table, Kmeans-CHHO-SVR matches or beats every other method on mean MSE for all five datasets: it is strictly best on D2 ($MSE_V$) and on D5 (both $MSE_V$ and $MSE_T$), and tied for best, at the reported three-decimal precision, in the remaining cases. Datasets D2 to D5 include gear hobbing-related parameters. Its standard deviation is also zero at that precision on D3 and D4, matching Kmeans-SSA-SVR and Kmeans-HHO-SVR, although on D5 Kmeans-HHO-SVR is more stable. Consistent predictions are crucial in gear hobbing because they ensure reliable operation of the gear hobbing machine.
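The "best or tied for best" reading of the test-MSE rows can be checked mechanically. The snippet below copies the mean $MSE_T$ values from the table (labels abbreviate Kmeans-X-SVR, with "SVR" standing for plain Kmeans-SVR) and lists every method within a tiny tolerance of the minimum, so ties at the reported precision are kept rather than broken arbitrarily.

```python
# Labels abbreviate Kmeans-<X>-SVR; "SVR" is plain Kmeans-SVR
METHODS = ["SVR", "HGSO", "SSA", "DA", "ALO", "PSO", "HHO", "CHHO"]

# Mean test MSE (MSE_T) per method, copied from the table above
MSE_T_MEAN = {
    "D1": [0.092, 0.093, 0.109, 0.113, 0.091, 0.121, 0.113, 0.091],
    "D2": [0.023, 0.027, 0.017, 0.020, 0.016, 0.049, 0.017, 0.016],
    "D3": [0.056, 0.005, 0.000, 0.092, 0.001, 0.309, 0.000, 0.000],
    "D4": [0.006, 0.066, 0.002, 0.002, 0.002, 0.126, 0.002, 0.002],
    "D5": [0.155, 0.107, 0.066, 0.084, 0.058, 0.120, 0.086, 0.052],
}


def best_methods(means, tol=1e-9):
    """Return every method whose mean is within tol of the minimum (ties kept)."""
    best = min(means)
    return [m for m, v in zip(METHODS, means) if v - best <= tol]


for ds, means in MSE_T_MEAN.items():
    print(ds, best_methods(means))
# D1 ['ALO', 'CHHO']
# D2 ['ALO', 'CHHO']
# D3 ['SSA', 'HHO', 'CHHO']
# D4 ['SSA', 'DA', 'ALO', 'HHO', 'CHHO']
# D5 ['CHHO']
```

Since the table rounds to three decimals, a tie here only means the difference is below 0.0005; separating such cases would require the unrounded per-run results.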

Regarding computational complexity, I measured the average runtime for each method across the datasets. The time complexity is a critical factor in industrial applications, as gear hobbing processes often require quick parameter adjustments. The table below shows the average consumption time in seconds:

| Dataset ID | Kmeans-SVR | Kmeans-HGSO-SVR | Kmeans-SSA-SVR | Kmeans-DA-SVR | Kmeans-ALO-SVR | Kmeans-PSO-SVR | Kmeans-HHO-SVR | Kmeans-CHHO-SVR |
|---|---|---|---|---|---|---|---|---|
| D1 | 1.97 | 2.85 | 4.41 | 6.97 | 5.50 | 2.93 | 1.00 | 8.54 |
| D2 | 1.52 | 1.79 | 3.19 | 5.15 | 4.84 | 2.82 | 1.47 | 6.22 |
| D3 | 1.72 | 3.12 | 6.23 | 9.50 | 7.02 | 3.60 | 1.40 | 13.37 |
| D4 | 14.50 | 18.18 | 156.02 | 216.50 | 153.03 | 40.56 | 84.79 | 306.51 |
| D5 | 15.02 | 17.32 | 27.97 | 34.60 | 29.46 | 20.66 | 33.83 | 46.29 |
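The runtime cost of the chaotic variant can be quantified directly from the table. This short check, using only the averages listed above, identifies the slowest method on each dataset and the runtime ratio of Kmeans-CHHO-SVR to its non-chaotic counterpart Kmeans-HHO-SVR.

```python
METHODS = ["SVR", "HGSO", "SSA", "DA", "ALO", "PSO", "HHO", "CHHO"]

# Average runtime in seconds, copied from the table above
RUNTIME_S = {
    "D1": [1.97, 2.85, 4.41, 6.97, 5.50, 2.93, 1.00, 8.54],
    "D2": [1.52, 1.79, 3.19, 5.15, 4.84, 2.82, 1.47, 6.22],
    "D3": [1.72, 3.12, 6.23, 9.50, 7.02, 3.60, 1.40, 13.37],
    "D4": [14.50, 18.18, 156.02, 216.50, 153.03, 40.56, 84.79, 306.51],
    "D5": [15.02, 17.32, 27.97, 34.60, 29.46, 20.66, 33.83, 46.29],
}

for ds, t in RUNTIME_S.items():
    slowest = METHODS[t.index(max(t))]
    ratio = t[METHODS.index("CHHO")] / t[METHODS.index("HHO")]
    print(f"{ds}: slowest = {slowest}, CHHO/HHO runtime = {ratio:.2f}x")
# D1: slowest = CHHO, CHHO/HHO runtime = 8.54x
# D2: slowest = CHHO, CHHO/HHO runtime = 4.23x
# D3: slowest = CHHO, CHHO/HHO runtime = 9.55x
# D4: slowest = CHHO, CHHO/HHO runtime = 3.61x
# D5: slowest = CHHO, CHHO/HHO runtime = 1.37x
```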

Kmeans-CHHO-SVR has the longest runtime on every dataset, from about 1.4 times (D5) to about 9.5 times (D3) that of Kmeans-HHO-SVR. I consider this cost justified by its accuracy, especially in critical gear hobbing tasks where prediction errors can lead to defective gears. To provide a comprehensive evaluation, I defined a ranking score $Rank$ that combines accuracy, stability, and time complexity:

$$Rank_k = \frac{1}{5} \left( MVR_k + MTR_k + StdVR_k + StdTR_k + CCR_k \right)$$

where $k$ indexes the compared methods, $MVR_k$ and $MTR_k$ are the normalized winning rates for validation and test MSE, $StdVR_k$ and $StdTR_k$ are the corresponding stability rates, and $CCR_k$ is the normalized time complexity. Kmeans-CHHO-SVR achieves the best overall $Rank$ of 0.226, indicating balanced performance across the five criteria.
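The $Rank$ formula is an equal-weight mean of five per-method criteria. The sketch below computes it for several methods at once; it assumes the five inputs are already normalized, because the article does not spell out how each rate is normalized, and the function name `rank_score` is hypothetical.

```python
import numpy as np


def rank_score(mvr, mtr, std_vr, std_tr, ccr):
    """Rank_k = (MVR_k + MTR_k + StdVR_k + StdTR_k + CCR_k) / 5 for each method k.

    Each argument is a sequence with one already-normalized value per method;
    the normalization itself is an assumption left to the caller.
    """
    terms = np.vstack([mvr, mtr, std_vr, std_tr, ccr]).astype(float)
    return terms.mean(axis=0)  # one Rank value per method (column)
```

With equal weights, each criterion contributes one fifth, so a fast but less accurate method can still score well on $Rank$; a weighted form like the $\beta$ and $\delta$ used for $CMT$ would be one way to make accuracy dominate.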

For engineering validation, I applied the method to a real gear hobbing scenario using historical data from a gear hobbing machine. The dataset included parameters such as gear modulus, pressure angle, number of teeth, hob diameter, and others, and the goal was to predict the optimal spindle speed and feed rate. The historical data comprised 29 samples, and the test case was a new gear whose cross-ball distance had to fall within tolerance. Kmeans-CHHO-SVR predicted a spindle speed of 382.6 rpm and a feed rate of 1.84 mm/r; the resulting gear had a measured cross-ball distance of 95.106 mm, inside the required tolerance of 95.09 ± 0.025 mm (95.065 to 95.115 mm). This demonstrates the practical applicability of the method in optimizing gear hobbing machine operations.
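The acceptance test for the machined gear is a simple symmetric-tolerance check. The helper name `within_tolerance` is hypothetical; the numbers are the nominal value, tolerance, and measurement reported in the text.

```python
def within_tolerance(measured, nominal, tol):
    """True if measured lies in the closed band [nominal - tol, nominal + tol]."""
    return nominal - tol <= measured <= nominal + tol


nominal, tol, measured = 95.09, 0.025, 95.106  # cross-ball distance, mm
print(within_tolerance(measured, nominal, tol))  # True
print(round((nominal + tol) - measured, 3))      # 0.009 (mm of margin to the upper limit)
```

The margin shows that the part passed, but sat closer to the upper limit than to the nominal value.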

In comparison with other methods, Kmeans-CHHO-SVR not only produced accurate predictions but also achieved the shortest cutting time in the gear hobbing process, as summarized below:

| Method | Spindle Speed (rpm) | Feed Rate (mm/r) | Qualified? | Decision Time (s) | Cutting Time (s) |
|---|---|---|---|---|---|
| Kmeans-SVR | 360.5 | 1.72 | Yes | 0.6 | 56.5 |
| Kmeans-HGSO-SVR | 380.0 | 1.85 | Yes | 6.0 | 49.8 |
| Kmeans-SSA-SVR | 371.0 | 1.85 | Yes | 6.9 | 51.0 |
| Kmeans-DA-SVR | 380.0 | 1.85 | Yes | 4.3 | 49.8 |
| Kmeans-ALO-SVR | 362.5 | 1.85 | Yes | 9.1 | 52.2 |
| Kmeans-PSO-SVR | 379.2 | 1.85 | Yes | 8.0 | 49.9 |
| Kmeans-HHO-SVR | 360.0 | 1.80 | Yes | 1.7 | 54.0 |
| Kmeans-CHHO-SVR | 382.6 | 1.84 | Yes | 4.2 | 49.7 |

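The results confirm that Kmeans-CHHO-SVR provides a viable solution for gear hobbing parameter prediction. Its decision time of 4.2 s is the third fastest, behind Kmeans-SVR (0.6 s) and Kmeans-HHO-SVR (1.7 s), and its cutting time of 49.7 s is the shortest, 0.1 s ahead of Kmeans-HGSO-SVR and Kmeans-DA-SVR, which improves overall efficiency in gear manufacturing.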
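The cutting times can be cross-checked with simple arithmetic. This is an observation on the reported numbers, not a claim made in the text: the product of cutting time, spindle speed, and feed rate stays near 35,000 for every method (within about 0.15%), so cutting time is essentially inversely proportional to speed × feed. That is consistent with the usual hobbing-time relation, in which, for a fixed gear and hob, machining time scales as 1/(speed × feed).

```python
# (method, spindle speed in rpm, feed rate in mm/r, cutting time in s), from the table above
RUNS = [
    ("Kmeans-SVR", 360.5, 1.72, 56.5),
    ("Kmeans-HGSO-SVR", 380.0, 1.85, 49.8),
    ("Kmeans-SSA-SVR", 371.0, 1.85, 51.0),
    ("Kmeans-DA-SVR", 380.0, 1.85, 49.8),
    ("Kmeans-ALO-SVR", 362.5, 1.85, 52.2),
    ("Kmeans-PSO-SVR", 379.2, 1.85, 49.9),
    ("Kmeans-HHO-SVR", 360.0, 1.80, 54.0),
    ("Kmeans-CHHO-SVR", 382.6, 1.84, 49.7),
]

for name, n, f, t in RUNS:
    # If t is proportional to 1 / (n * f), then t * n * f should be roughly constant
    print(f"{name:16s} n*f = {n * f:7.2f}   t*n*f = {t * n * f:8.0f}")

products = [t * n * f for _, n, f, t in RUNS]
by_speed_feed = [r[0] for r in sorted(RUNS, key=lambda r: -r[1] * r[2])]
by_cutting_time = [r[0] for r in sorted(RUNS, key=lambda r: r[3])]
```

Ordering the methods by descending speed × feed gives exactly the same order as ascending cutting time, so the cutting-time lead of Kmeans-CHHO-SVR follows from it predicting the largest speed-feed product while still producing a qualified gear.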

In conclusion, the integration of K-means clustering and chaotic Harris Hawks optimization with Support Vector Regression offers a robust framework for hyper-parameter adaptive prediction. This method addresses the challenges of accuracy, stability, and computational complexity, making it suitable for big data applications like gear hobbing. The gear hobbing machine benefits from precise parameter predictions, which can improve gear quality and reduce production costs. Future work could focus on further optimizing the clustering process and exploring other chaotic maps to enhance performance. Overall, this approach demonstrates significant potential for advancing predictive modeling in manufacturing and beyond.